References

・Murayama, Uemura, Toyotani et al., "Determination of Biphasic Menstrual Cycle Based on the Fluctuation of Abdominal Skin Temperature during Sleep", Advanced Biomedical Engineering, vol.12, no.1, pp.28-36, 2023.02.

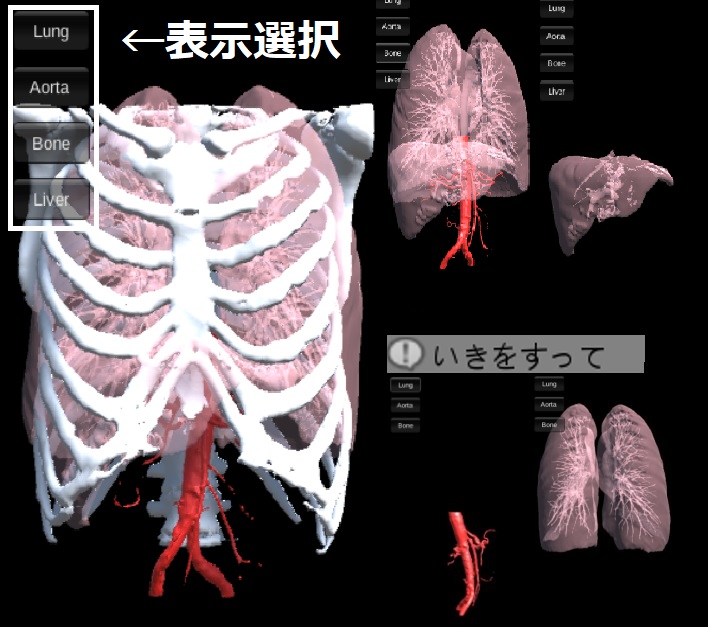

・Miura, Omae, Kakimoto, Toyotani et al., "Three-State Classification of Pulmonary Artery Wedge Pressure from Chest X-Ray Images Using Convolutional Neural Network", ICIC Express Letters, Part B: Applications, ICIC International, vol.14, no.3, pp.271-277, 2023.03.

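・Kakimoto, Omae, Toyotani, Hara, Takahashi, "A Seat Allocation Model for Restaurants to Suppress the Risk of COVID-19 Infection" (in Japanese), Journal of Japan Industrial Management Association, vol.74, no.2, pp.77-89, 2023.07.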

・Yuki Saito, Yuto Omae, Saki Mizobuchi, Hidesato Fujito, Masatsugu Miyagawa, Daisuke Kitano, Kazuto Toyama, Daisuke Fukamachi, Jun Toyotani, Yasuo Okumura, Prognostic significance of pulmonary arterial wedge pressure estimated by deep learning in acute heart failure, ESC Heart Failure, 2022.12. doi: 10.1002/ehf2.14282

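・Yuto Omae, Yohei Kakimoto, Yuki Saito, Daisuke Fukamachi, Koichi Nagashima, Yasuo Okumura, Jun Toyotani, Dimensionality Reduction of CNN Features Based on Feature-Map Anomaly Scores Using the Wasserstein Distance (in Japanese), IEICE Technical Report (ME and Bio Cybernetics), vol.122, no.291, pp.29-31, 2022.11.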

・Yuto Omae, Yuki Saito, Yohei Kakimoto, Daisuke Fukamachi, Koichi Nagashima, Yasuo Okumura, Jun Toyotani, GUI System to Support Cardiology Examination Based on Explainable Regression CNN for Estimating Pulmonary Artery Wedge Pressure, IEICE Transactions on Information and Systems, 2022.12. doi:10.1587/transinf.2022EDL8059

・Yuto Omae, Makoto Sasaki, Jun Toyotani, Kazuyuki Hara, Hirotaka Takahashi, Theoretical Analysis of the SIRVVD Model for Insights into the Target Rate of COVID-19/SARS-CoV-2 Vaccination in Japan, IEEE Access, vol.10, pp.43044-43054, 2022.04. doi:10.1109/ACCESS.2022.3168985.

・Yuki Saito, Yuto Omae, Daisuke Fukamachi, Koichi Nagashima, Saki Mizobuchi, Yohei Kakimoto, Jun Toyotani, Yasuo Okumura, Quantitative Estimation of Pulmonary Artery Wedge Pressure from Chest Radiographs by a Regression Convolutional Neural Network, Heart and Vessels, vol.37, no.8, pp.1387-1394, 2022.02.

・Yuto Omae, Yohei Kakimoto, Makoto Sasaki, Jun Toyotani, Kazuyuki Hara, Yasuhiro Gon, Hirotaka Takahashi, SIRVVD model-based verification of the effect of first and second doses of COVID-19/SARS-CoV-2 vaccination in Japan, Mathematical Biosciences and Engineering, vol.19, issue 1, pp.1026-1040, 2022. doi: 10.3934/mbe.2022047.

Researchmap